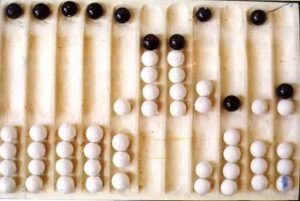

Abacus

The abacus was an early aid for mathematical computations. Its only value is that it aids the memory of the human performing the calculation. A skilled abacus operator can work on addition and subtraction problems at the speed of a person equipped with a hand calculator (multiplication and division are slower).

The abacus is often wrongly attributed to China. In fact, the oldest surviving abacus was used in 300 B.C. by the Babylonians. The abacus is still in use today, principally in the Far East.

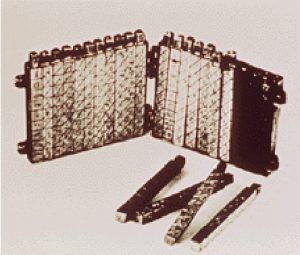

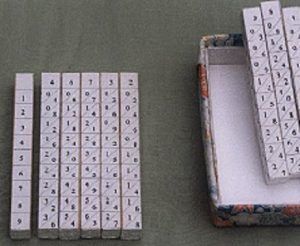

Napier’s Bones

In 1614 an eccentric (some say mad) Scotsman named John Napier published his invention of logarithms, a technology that allows multiplication to be performed via addition. For example, log₁₀(100 × 1000) = log₁₀ 100 + log₁₀ 1000 = 2 + 3 = 5, so 100 × 1000 = 10⁵.

The magic ingredient is the logarithm of each operand, which was originally obtained from a printed table. In 1617 Napier also described a separate calculating aid: a set of rods, often carved from bone or ivory and now called Napier’s Bones, inscribed with multiplication tables so that a product could be read off by adding the digits in adjacent columns.
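The identity behind logarithms is log(a × b) = log a + log b. As a minimal sketch, assuming base-10 tables rounded to four decimal places (a choice made here to mimic a printed table, not taken from Napier), the Python below imitates a table user: it looks up both logarithms, adds them, and takes the antilogarithm. The function name `log_table_multiply` is hypothetical.

```python
import math


def log_table_multiply(a: float, b: float, places: int = 4) -> float:
    """Multiply two positive numbers the way a log-table user would."""
    log_a = round(math.log10(a), places)  # "look up" log a, rounded like a printed table
    log_b = round(math.log10(b), places)  # "look up" log b
    log_product = log_a + log_b           # the multiplication becomes an addition
    return 10 ** log_product              # antilogarithm of the sum


print(37 * 52, log_table_multiply(37, 52))
```

The rounding step is why table-based results were approximate: each lookup is only as accurate as the table's number of places, so the product carries a small relative error.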

Slide Rule

Napier’s logarithms led directly to the slide rule, first built in England in 1632 and still in use in the 1960s by the NASA engineers of the Mercury, Gemini, and Apollo programs, the last of which landed men on the moon.

Calculating Clock

The first gear-driven calculating machine to actually be built was probably the calculating clock, so named by its inventor, the German professor Wilhelm Schickard, in 1623. This device got little publicity because Schickard died of the bubonic plague in 1635.

Blaise Pascal

Fig: 6 Digit Pascaline (Cheaper)

In 1642 Blaise Pascal, at age 19, invented the Pascaline as an aid for his father, who was a tax collector. Pascal built 50 of these gear-driven, one-function calculators (they could only add) but couldn’t sell many because of their exorbitant cost and because they really weren’t that accurate (at that time it was not possible to fabricate gears with the required precision). Until car dashboards went digital, the odometer portion of a car’s speedometer used the very same mechanism as the Pascaline: each wheel advances the next wheel by one step every time it completes a full revolution.
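The carry that the Pascaline and the odometer share can be sketched in Python. This is a simplified model, not a description of Pascal's actual gearing: each wheel holds a digit from 0 to 9, `wheels[0]` is the ones wheel, and when a wheel rolls past 9 it returns to 0 and advances the next wheel by one. The helper names (`dial`, `reading`, `add_digit`, `add_number`) are hypothetical.

```python
def dial(n: int, size: int = 6) -> list[int]:
    """Set the wheels to show n; wheels[0] is the ones wheel."""
    return [(n // 10 ** i) % 10 for i in range(size)]


def reading(wheels: list[int]) -> int:
    """Read the number currently shown on the wheels."""
    return sum(d * 10 ** i for i, d in enumerate(wheels))


def add_digit(wheels: list[int], digit: int, position: int) -> list[int]:
    """Turn the wheel at `position` forward by `digit` steps (0-9),
    rippling a carry into each higher wheel that passes 9."""
    wheels = wheels.copy()
    carry = digit
    i = position
    while carry and i < len(wheels):
        total = wheels[i] + carry
        wheels[i] = total % 10  # the wheel rolls over past 9
        carry = total // 10     # a full revolution advances the next wheel by 1
        i += 1
    # A carry out of the highest wheel is lost, like an odometer rolling over.
    return wheels


def add_number(wheels: list[int], n: int) -> list[int]:
    """Add n by dialing each of its digits into the matching wheel."""
    position = 0
    while n and position < len(wheels):
        wheels = add_digit(wheels, n % 10, position)
        n //= 10
        position += 1
    return wheels


print(reading(add_number(dial(4825), 377)))  # 5202
print(reading(add_number(dial(999999), 1)))  # 0 (every wheel rolls over)
```

Adding 1 to a reading of 999999 on six wheels rolls every wheel back to 0, just as an odometer does when it runs out of digits.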

Charles Babbage

By 1822 the English mathematician Charles Babbage was proposing a steam-driven calculating machine the size of a room, which he called the Difference Engine.

Difference Engine

This machine would be able to compute tables of numbers, such as logarithm tables. He obtained government funding for this project due to the importance of numeric tables in ocean navigation. Construction of Babbage’s Difference Engine proved exceedingly difficult, and the project soon became the most expensive government-funded project up to that point in English history. Ten years later the device was still nowhere near complete, acrimony surrounded Babbage, and the machine was never finished.

Analytical Engine

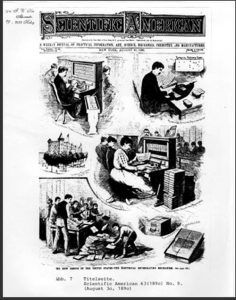

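Babbage was not deterred, and by then was on to his next brainstorm, which he called the Analytical Engine. This device, which would have been as large as a house and powered by 6 steam engines, was designed to be programmable, thanks to the punched card technology of the Jacquard loom. Babbage saw that the pattern of holes in a punched card could be used to represent an abstract idea such as a problem statement or the raw data required for that problem’s solution.

Hollerith Desk

The Hollerith desk, built by Herman Hollerith for the 1890 U.S. census, read the holes in punched cards electrically and tallied the results on dial counters.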

Hollerith’s technique was successful and the 1890 census was completed in only 3 years at a savings of 5 million dollars.

IBM

Hollerith built a company, the Tabulating Machine Company which, after a few buyouts, eventually became International Business Machines, known today as IBM.

Hollerith’s Innovation

By using punch cards, Hollerith created a way to store and retrieve information mechanically. This made punched cards one of the earliest forms of machine-readable data storage.

Examples of Punch Cards:

Mark I

One early success was the Harvard Mark I computer which was built as a partnership between Harvard and IBM in 1944. This was the first programmable digital computer made in the U.S. But it was not a purely electronic computer. Instead the Mark I was constructed out of switches, relays, rotating shafts, and clutches.

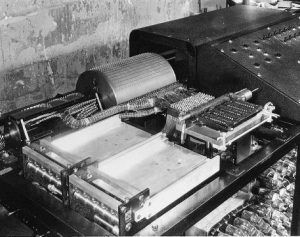

Atanasoff – Berry Computer

One of the earliest attempts to build an all-electronic (that is, no gears, cams, belts, shafts, etc.) digital computer was made in 1937 by J. V. Atanasoff of Iowa State, who was later joined by his graduate student Clifford Berry. This machine was the first to store data as a charge on a capacitor, which is how today’s computers store information in their main memory (DRAM, or dynamic RAM). As far as its inventors were aware, it was also the first to employ binary arithmetic.
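Binary arithmetic works like ordinary column addition, except that each column holds only 0 or 1 and a carry is produced whenever a column sums to 2 or more. A minimal Python sketch follows; the function name `add_binary` is hypothetical, and the ABC itself did this with vacuum-tube circuits rather than software.

```python
def add_binary(a: str, b: str) -> str:
    """Add two binary numerals (strings of '0' and '1') column by column."""
    result = []
    carry = 0
    i, j = len(a) - 1, len(b) - 1           # start at the rightmost bit of each
    while i >= 0 or j >= 0 or carry:
        bit_a = int(a[i]) if i >= 0 else 0  # missing columns count as 0
        bit_b = int(b[j]) if j >= 0 else 0
        total = bit_a + bit_b + carry
        result.append(str(total % 2))       # the bit that stays in this column
        carry = total // 2                  # 1 if the column summed to 2 or 3
        i -= 1
        j -= 1
    return "".join(reversed(result)) or "0"


print(add_binary("1011", "110"))  # 10001 (11 + 6 = 17)
```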

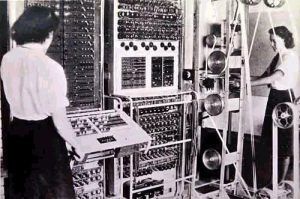

Colossus

The Colossus was built during World War II by Britain to break the cryptographic codes used by Germany. Britain led the world in designing and building electronic machines dedicated to code breaking, and was routinely able to read coded German radio transmissions. Colossus was not, however, a general-purpose, reprogrammable machine.

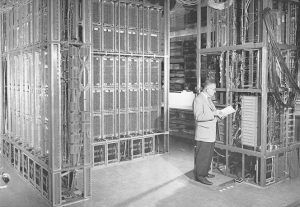

ENIAC

The title of forefather of today’s all-electronic digital computers is usually awarded to ENIAC, which stood for Electronic Numerical Integrator and Computer. ENIAC was built at the University of Pennsylvania between 1943 and 1945 by John Mauchly and the 24-year-old J. Presper Eckert, who got funding from the War Department after promising they could build a machine that would replace all the human “computers” (people who performed calculations by hand). ENIAC filled a 20 by 40 foot room.

EDVAC

It took days to change ENIAC’s program. Eckert and Mauchly next teamed up with the mathematician John von Neumann to design EDVAC, which pioneered the stored-program concept: the program is kept in the same memory as the data, so it can be changed without rewiring the machine. After ENIAC and EDVAC came other computers with humorous names such as ILLIAC, JOHNNIAC, and, of course, MANIAC.
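The stored-program idea can be illustrated with a toy machine. The sketch below is a hypothetical accumulator machine written in Python, not EDVAC’s real instruction set: instructions and data sit side by side in one `memory` list, and a program counter steps through the instructions. The function name `run` and the opcodes `LOAD`, `ADD`, `STORE`, and `HALT` are assumptions made for illustration.

```python
def run(memory: list) -> list:
    """Run a toy accumulator machine whose program and data share one memory.

    Each instruction is an (opcode, address) tuple; any other cell is data.
    """
    acc = 0  # accumulator register
    pc = 0   # program counter: address of the next instruction
    while True:
        op, addr = memory[pc]
        pc += 1
        if op == "LOAD":
            acc = memory[addr]
        elif op == "ADD":
            acc += memory[addr]
        elif op == "STORE":
            memory[addr] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode {op!r} at address {pc - 1}")


# Cells 0-3 hold the program, cells 4-6 hold the data.
memory = [
    ("LOAD", 4),   # acc = memory[4]
    ("ADD", 5),    # acc += memory[5]
    ("STORE", 6),  # memory[6] = acc
    ("HALT", 0),
    2, 3, 0,
]
run(memory)
print(memory[6])  # 5
```

Replacing `memory[1]` with `("ADD", 4)` makes the same machine compute 2 + 2 instead; reprogramming is just a write to memory, with no change to the "hardware" (here, the `run` function).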

UNIVAC

The UNIVAC computer was the first commercial (mass-produced) computer in the United States. In the 1950s, UNIVAC (a contraction of “Universal Automatic Computer”) was the household word for “computer” just as “Kleenex” is for “tissue”. UNIVAC was also the first commercial computer to use magnetic tape.

FIVE GENERATIONS OF COMPUTERS

- First Generation Computers (1940-1956)

The first computers used vacuum tubes for circuitry and magnetic drums for memory. They were often enormous, taking up entire rooms. First generation computers relied on machine language. They were very expensive to operate and, in addition to using a great deal of electricity, generated a lot of heat. One of the first electronic computers was designed at Iowa State between 1939 and 1942. The Atanasoff-Berry Computer used the binary system (1s and 0s), contained vacuum tubes, and stored numbers for calculations by burning holes in paper.

- Second Generation Computers (1956-1963)

Transistors replaced vacuum tubes and ushered in the second generation of computers. Second generation computers moved from cryptic binary machine language to symbolic, or assembly, languages, which allowed programmers to specify instructions in words. High-level programming languages were also being developed at this time, such as early versions of COBOL and FORTRAN.

- Third Generation Computers (1964-1971)

The development of the integrated circuit was the hallmark of the third generation of computers. Transistors were miniaturized and placed on silicon chips, called semiconductors. Instead of punched cards and printouts, users interacted with third generation computers through keyboards and monitors and interfaced with an operating system. A single integrated circuit (IC) could replace hundreds of individual transistors, which made computers even smaller and faster.

- Fourth Generation Computers (1971-Present)

The microprocessor brought the fourth generation of computers, as thousands of transistors were built onto a single silicon chip. The Intel 4004, developed in 1971, placed the computer’s entire central processing unit on a single chip. Fourth generation computers also saw the development of GUIs, the mouse, and handheld devices.

- Fifth Generation Computers (Present and Beyond)

Fifth generation computing devices, based on artificial intelligence, are still in development, though there are some applications, such as voice recognition. The use of parallel processing and superconductors is helping to make artificial intelligence a reality. The goal of fifth generation computing is to develop devices that respond to natural language input and are capable of learning and self-organization.

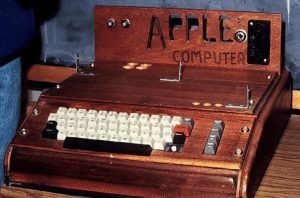

Apple Macintosh

The Amiga

Windows 3

Macintosh System 7

Apple Newton

Standard UNIX

Power PC

IBM OS/2

Windows 95
